We heard a lot about AI and machine learning at the Google I/O developers conference keynote last week, but there was one word that didn’t make an appearance on a slide: privacy. Unlike its heavyweight peers, Google didn’t announce changes to the way it tracks and collects your data. If anything, it committed to further data collection with things like the Google Duplex project, which uses your phone to make Assistant-powered calls in the real world.

While Facebook is trying to salvage its image in the wake of the Cambridge Analytica scandal and Apple is positioning privacy as a “fundamental human right,” Google continues to walk a fine line between protecting and profiling our data. Google makes no secret of the importance of data in its machine learning and artificial intelligence projects. While the tools are available to limit it, it doesn’t exactly advertise them either.

Unlike Apple, which puts a strict barrier between its customers and the company, Google is up-front about how much data it uses. Nearly every product and feature Google demonstrated at I/O was the direct result of the way consumers already use its products. Barring a Cambridge Analytica-sized revelation that some foreign entity is misusing it, data collection in all forms is not going to stop at Google. So unless you want to banish the search giant from your life completely (in which case you probably shouldn’t buy an Android phone), you’re going to have to relinquish a certain degree of your privacy.

You may have gotten an e-mail from Google last week about how it has “improved the way we describe our practices and how we explain the options you have to update, manage, export, and delete your data”. That’s due to the EU’s General Data Protection Regulation, which goes into effect on 25 May, and it’s a welcome extension of the company’s transparency. But make no mistake, Google isn’t making any real changes to its privacy practices. Rather, it’s shifting the onus from the company to the user – presenting a tough choice in the process.

Privacy as a right and a choice

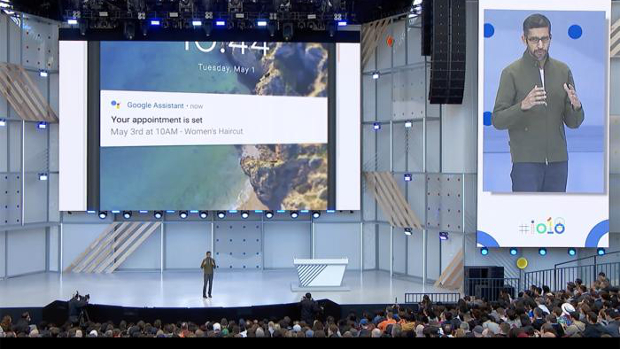

There were audible gasps after the Duplex demo during the I/O keynote, and for good reason. For the first time outside a scripted Hollywood setting, the crowd witnessed a fully realised AI bot calling a human business, interacting with a live person on the other end, and actually accomplishing a task, in this case making a haircut appointment.

Had Google CEO Sundar Pichai not informed us that it was Google Assistant making the call, barely anyone in the audience would have known. Assistant had actual speech patterns and verbal tics. It responded to questions asked by the person on the other end. It exchanged pleasantries. In short, it had a real, human conversation. There’s nothing even close to that level of AI coming out of Cupertino, and I doubt Apple’s WWDC developer conference in June will change that.

Putting aside the potential for misuse, Duplex wouldn’t be possible without the data Google collects from its users. But it also requires a loose privacy stance on behalf of the user to operate. For one, it needs location sharing turned on so it can find a number to dial. It needs access to your calendar to check for any conflicts. It’s representing you in the real world. And most importantly, it’s learning intimate details about your personal life, and even something as seemingly innocuous as how often you cut your hair is valuable.

As it stands, these are permission toggles that most users wouldn’t consider turning off. They help make things like Maps and Google Assistant work so well, and of course they’re not just limited to Android phones. Apple asks its users to turn on location sharing and permissions on iPhones as well, but the difference there is that most of the data doesn’t leave your device. The parts that do are so encrypted that it’s almost impossible to trace them back to any one user. Google doesn’t make the same promise.

As AI becomes more prevalent and mature, more users are going to look for ways to limit how much Google can see. But while they’ll find surprisingly robust privacy options for their Android phone and Google account, the price of flipping those toggles might be too high.

Data-driven value

Because data collection is central to Google’s AI efforts, things don’t work nearly as well without Web and app activity and location history turned on. In fact, some things don’t work at all when you try to lock down your account. Turn off location services, and Maps doesn’t launch. Flip off the Voice and Audio Activity controls, and say goodbye to Assistant.

So Google is offering a very difficult choice. Where Apple has basically decided that privacy is more important than powerful AI for its billion-plus users, Google has taken a different tack. If you forgo its best-in-class AI and machine learning, you’ll alter the experience considerably. Don’t like Gmail scanning your e-mails to provide smart suggestions? Fine, don’t turn it on. Don’t like personalised restaurant recommendations? Change your location settings. But you won’t be getting the best possible Google experience.

This is part of Google’s strategic privacy play. The onus is on the user to find and implement their own privacy controls. Privacy on a Google device can be as strong as it is on an Apple one, but Google sets up its model to steer users toward temporary privacy locks – things like incognito mode on Chrome and Do Not Disturb – rather than the nuclear toggles on your Activity page. By tying Location History to Assistant, for example, Google is making the choice to turn off one of the most invasive trackers nearly impossible to make.

A question of responsibility

Google might not have mentioned privacy at its developers conference, but it’s still a major part of its policy. For a large part of the show, the concept of Digital Wellbeing was touted as a way to unplug from your phone. That’s the crux of Google’s privacy stance: you decide your own level of involvement.

Nowhere is that better illustrated than in Android P. In addition to the Android Dashboard that lets you set limits on app usage and desaturates the screen when it’s time for bed, there’s also a Lockdown option. Lockdown protects your phone from prying eyes by disabling Smart Lock, fingerprint unlocking, and lock-screen notifications. It’s an extreme option for sure, but for Google, it’s no more extreme than turning off location services or activity. For everything to work, you need to trust Google to keep your data safe, and Google needs to trust its users to make the right choices with their privacy.

Apple has already made that decision for you. With Google, the choice is up to the user. But the decision might not be so easy.

IDG News Service
