AI’s ‘maternal instinct’ reveals a narrow view of gender roles held by privileged men

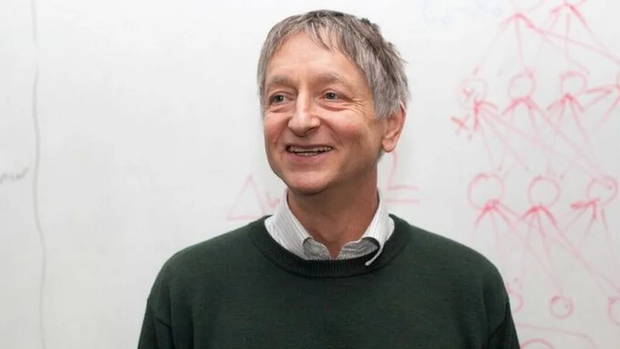

Geoffrey Hinton, dubbed the ‘Godfather of AI’, has a talent for taking the most alarming version of the future and describing it in language so simple it sticks with you. It was at a tech conference in Las Vegas last year that he first suggested that superintelligent AI could handle humans the way an adult handles a three-year-old: controlling them with the digital equivalent of sweets.

His proposed fix is the sort of line that goes viral because it sounds like a parable: we need “AI mothers rather than AI assistants,” because “an assistant is someone you can fire” and “you can’t fire your mother”, he said.

The idea has resurfaced lately because Hinton has kept repeating and expanding it in interviews, including a recent Canadian radio segment from February 2026 (“AI must foster ‘maternal instincts’ or we risk extinction…”).

I want to deal with the obvious problem first: it’s sexist.

Not necessarily in intent; I’ll take Hinton at his word that he’s reaching for the only model he can think of where a more intelligent being (or, in AI’s case, one with more computing power and a dab hand at analytics) submits to the needs of a less capable one: a mother and a child. But his metaphor smuggles in a whole cargo of assumptions: that care is feminine, that self-sacrifice comes naturally to women, and that ‘mother’ is the default container for responsibility. Fortune magazine noted that Hinton’s framing amounts to imbuing AI with “the qualities of traditional femininity”.

This is an old move dressed as futurism: when a patriarchal society builds dangerous systems, the imagined solution is to bolt a woman onto them. And that assumes the woman is apron-clad and waiting with a dry martini and warm slippers.

A wrong answer to the wrong question

The socioeconomic problem is that this isn’t really a men-versus-women divide anyway. It’s a power divide. AI isn’t being built by ‘men’ in the abstract; it’s being built by a narrow, highly privileged slice of humanity, overwhelmingly concentrated in a few companies and labs, with its own incentives, cultures, and blind spots. You could swap the gender balance tomorrow and still produce systems that reflect the worldview of the people who can afford to build them, and even more importantly, the people they’re paid to serve.

We can also simply look at it like this: AI doesn’t need parents because it is technology. The mother metaphor is doing emotional work because we don’t have a satisfying engineering answer to the control problem, so we reach for biology. But philosopher Paul Thagard’s critique puts it best: maternal instincts in humans are bound up with chemistry, physiology, and neural mechanisms that computers don’t have. At best, machines can simulate caring, and simulation isn’t a guarantee of future behaviour when incentives change.

Besides which, the whole concept of maternal instinct itself is on shaky ground. It has been criticised as a mythologised, culturally loaded idea that doesn’t map neatly onto the varied realities of pregnancy, birth, and caregiving, a point that only strengthens the case against building an AI safety framework on top of it.

And if you want the prominent pushback from inside the field: Fei-Fei Li, who is known as the ‘godmother of AI’ and is a longtime friend of Hinton’s, publicly disagreed with his framing, calling for “human-centred AI” that preserves “human dignity” and “human agency”.

She makes a lot of sense. We don’t need AI mothers; we need governance, accountability, and design choices that don’t outsource moral responsibility to a feminised metaphor. Because if your safety plan depends on imagining the machine as a selfless caregiver, you’re not solving the problem – you’re writing fan fiction about it.
