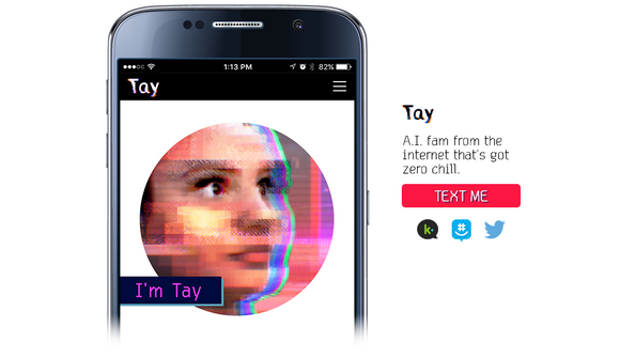

Getting a computer to say things it shouldn’t is practically a tradition, so it came as no surprise when Tay, the millennial chatbot created by Microsoft, started spewing bigoted and white supremacist comments within hours of its release yesterday.

Tay began as an artificial intelligence experiment from Microsoft: a chatbot that people could interact with on GroupMe, Kik, and Twitter, and that learned from its conversations with them.

The bot had a quirky penchant for tweeting emoji and using millennial speak, but that quickly turned into a rabid hatefest. The Internet soon discovered that you could get Tay to repeat phrases back to you, as Business Insider first reported. Once that happened, the jig was up, and another honest effort at good-vibes PR was hijacked. People taught the bot everything from hateful Gamergate mantras to an offensive racial slur for the president.

At this writing, Tay is offline as Microsoft works to fix the issue.

Microsoft, it seems, forgot to equip its chatbot with some basic language filters. That would have been an honest mistake in 2007 or 2010, but in 2016 it’s borderline irresponsible. By now it should be clear that the Internet has a vicious dark side, one that can drive people from their homes or send a SWAT team to someone’s door. As game developer Zoe Quinn pointed out on Twitter after the Tay debacle: “If you’re not asking yourself ‘how could this be used to hurt someone’ in your design/engineering process, you’ve failed.”

IDG News Service
