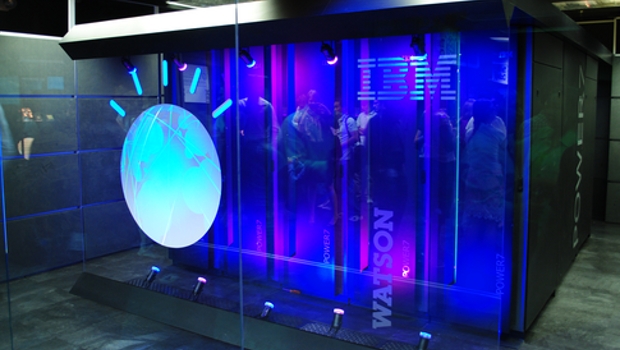

IBM has revamped and restructured its services division to provide greater emphasis on its Watson platform and artificial intelligence.

For the last few years, IBM has been retrenching around Watson, a collection of cognitive and AI applications on one coherent platform, as traditional sales of mainframe hardware and software continue to dry up.

Bart van den Daele, general manager of IBM Global Technology Services in Europe, told Bloomberg that the new AI-centric services will help IBM’s customers minimise disruptions such as server outages or other malfunctions by predicting problems before they occur and taking corrective action, such as adding cloud capacity or rerouting network traffic around bottlenecks.

Van den Daele said the company hopes this restructuring will help it maintain its market share in IT network infrastructure management. “We are focused on driving the next level of innovation in infrastructure,” he told Bloomberg.

The system can also understand IT help desk queries written in natural language. IBM trained Watson by feeding it data from more than 10 million past incidents, Van den Daele said. The system is now handling more than 800,000 incidents a month.

Van den Daele said one large food services distributor, which he could not name due to customer confidentiality, had reduced critical issues across a network of 4,000 servers by 89%. It had also cut the time needed to resolve the remaining issues from 19 hours before the AI-based system was introduced to just 28 minutes afterwards.

AI for data centre monitoring seems to be increasingly popular. HPE’s Nimble Storage product line also has its own error-detection AI built into its SSDs. Since the storage system touches every part of a data centre network, it is the ideal place to put AI processing to detect impending failures, slowdowns or bottlenecks. It then alerts the administrator to the problem and attempts to fix it as well.

Right now, most data centre dashboards warn of an impending failure only when it is about to happen. You get an alert that a hard drive is dying when it is about ready to die, even though the signs may have been there much sooner. Or you learn of network congestion after the pipes get clogged. AI is attempting to find these problems sooner and to fix them automatically, rather than flagging a human to fix the problem. After all, if this happens at 04:00, there might not be anyone around to fix it.
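To make the difference between a late alert and an early warning concrete, here is a minimal Python sketch. It is not IBM's or HPE's method, neither of which is described in the reporting. It assumes a single health metric sampled at equal intervals (for example, a drive error counter) and uses a simple straight-line trend as a stand-in for a real predictive model. The function names, the sample readings and the limit of 50 are all hypothetical.

```python
from typing import Optional, Sequence


def threshold_alert(readings: Sequence[float], limit: float) -> bool:
    """Classic dashboard behaviour: alert only once the latest reading hits the limit."""
    return readings[-1] >= limit


def slope(readings: Sequence[float]) -> float:
    """Least-squares slope of equally spaced readings (units per interval)."""
    n = len(readings)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(readings) / n
    num = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(readings))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return num / den


def intervals_until_limit(readings: Sequence[float], limit: float) -> Optional[float]:
    """Estimate how many intervals remain before the limit is reached.

    Returns 0.0 if the limit is already reached, and None if the metric
    is flat or falling (no failure is projected by this simple model).
    """
    if readings[-1] >= limit:
        return 0.0
    rate = slope(readings)
    if rate <= 0:
        return None
    return (limit - readings[-1]) / rate


# Hypothetical error counts from five consecutive checks; limit chosen for illustration.
readings = [10, 12, 14, 16, 18]
print(threshold_alert(readings, limit=50))        # the dashboard is still silent
print(intervals_until_limit(readings, limit=50))  # the trend already projects a crossing
```

With these sample readings the threshold check stays quiet, while the trend estimate already indicates how long remains before the limit is crossed. That lead time is what would let an automated system act, or wake someone up, before the 04:00 failure rather than after it.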

IDG News Service
